If AI keeps giving you bland writing, obvious ideas, and work you still have to rescue, the problem usually is not the model.

You haven’t really trained it, briefed it, or given it enough context to do the job well. You’ve been throwing work at it and hoping for the best.

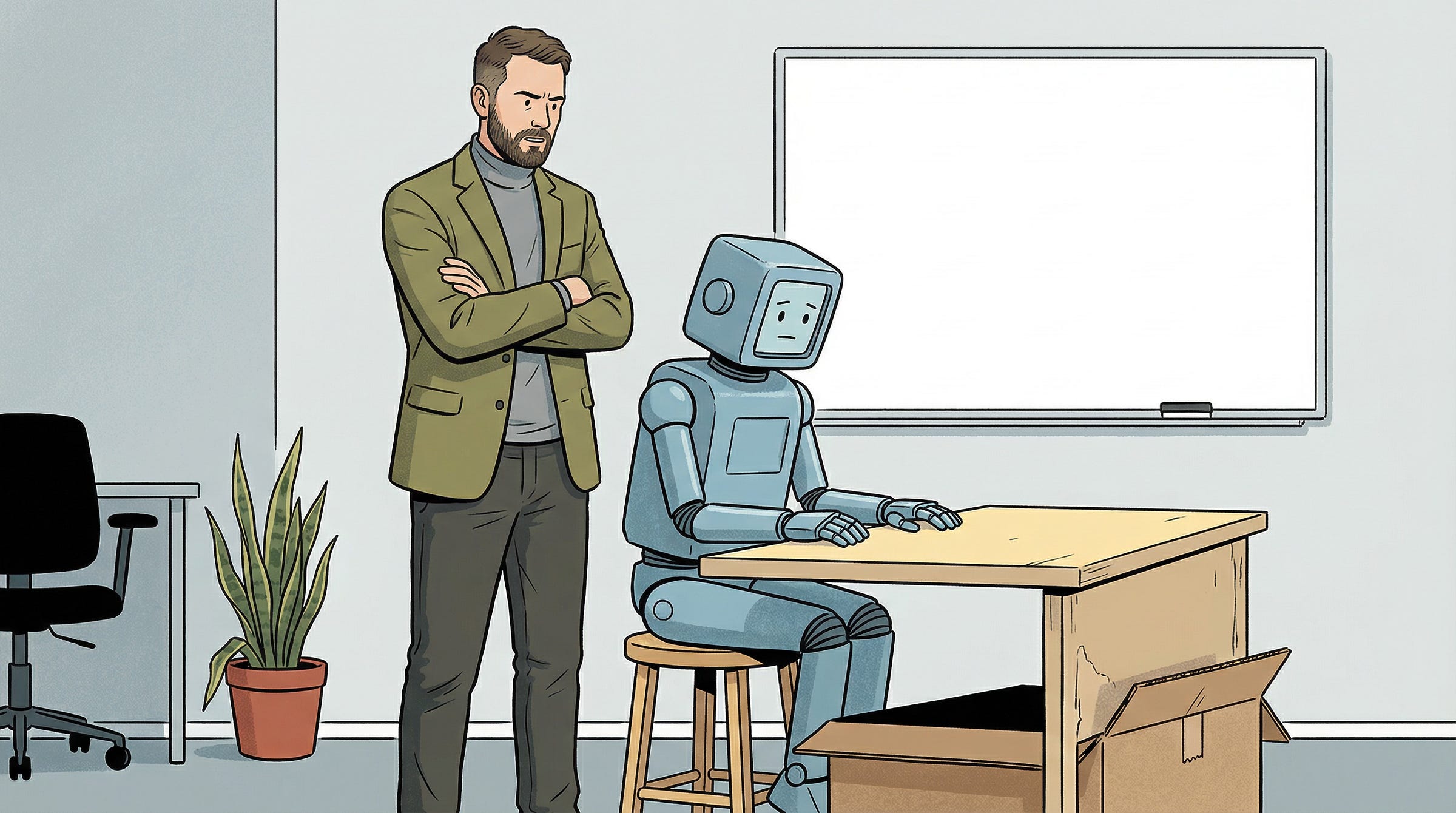

You wouldn’t onboard a human hire this way. AI is no different.

To get real value from it, you need to treat AI more like staff and take the time to train it properly. That’s the point Millennial Masters guests keep coming back to.

So instead of asking why the AI got it wrong, ask what a new hire would need to know to do this well. Frame it that way and the mistake becomes obvious. 👇🏻

You skipped the onboarding

Carly Meyers explained it in the clearest way I’ve heard: “Think of it like one person. Think of it like you’ve just hired someone.

“Say you just hired a sales and marketing person. They’ve walked into your office for the first time. You sit them down and say, right, this is what I want you to do.”

That’s the part most founders skip. They don’t explain the business properly or spell out who the customer is.

They also don’t show the AI what the brand sounds like, what counts as good work, what needs approval, or where it should stay in its lane.

They start with tasks, which is the same mistake people make with new hires when they are moving too fast. Things get messy quickly.

None of this is new. Even strong hires need context, and good employees take time to become useful. Drop someone into the middle of the business without proper onboarding and the work usually comes back half-baked.

Then AI gets treated as if none of that applies. People expect close-to-finished output on day one, and when it disappoints, the tool gets the blame.

This is a management problem

Damon Flowers was direct: “AI is like a staff member and it can do anything. It can do finances, it can do marketing, it can have a conversation with you and negotiate stuff.

“But you’ve got to be really good at training it around who it is, what’s the role, what context you’re giving it, and what do you specifically want it to go do for you.”

That’s the shift a lot of founders still haven’t made.

If AI is sitting inside your workflow like a member of staff, the output depends on the job you have given it and how clearly you have set it up.

If that setup is vague, the output usually comes back vague too. You get writing, research, strategy, and sales copy that could belong to almost anyone.

Jess Jensen made the same point: “I think of AI as almost like a junior employee. The more that I train and invest in that junior employee to get them to understand our strategy, our goals, our vision, who I am, the better the output’s going to be because now they’re really tracking with me.”

That’s a much better approach than treating AI like a one-shot tool. The quality improves when it starts following how you think and the standards you work to.

Compare that with the one-shot habit: open a fresh window, type a request, get something back, and judge the whole experience on that single exchange. Of course the output disappoints.

There’s no real context, no memory worth using, no examples from previous work, and no proper explanation of how the business operates.

Ryan Carruthers described AI as “a marketing or copywriting assistant right now… like having a graduate, someone who’s just getting into it, who’s starting to learn the frameworks.”

That’s useful because it brings the expectation back down to earth. A graduate can help and save you time. They can also waste a lot of time if you expect them to understand the business from one instruction and a few scraps of documentation.

You’re expecting senior output from something you barely trained, then blaming the tool when it cannot deliver.

Give it a job first

Start by getting clear on the role. A lot of AI use is still far too broad.

If you ask it to be a strategist, researcher, editor, analyst, and operator all at once, it ends up being none of them properly. If you want better work, define exactly what role it is supposed to play in the business.

That might mean a copy assistant, a research analyst, a sales support function, a coding reviewer, or an editorial second pair of eyes. The more precise the role, the easier it becomes to train well.

Carly Meyers’ framing helps here: one “person” does one type of work. With a single defined role, the AI is no longer guessing what it is there to do.

The practical test is simple. If a human joined your team tomorrow and got this exact brief, would they know what role they were in and what good looked like in that role? If the answer is no, the AI will struggle too.

Once the role is clear, context starts doing most of the heavy lifting. A lot of people cut corners here because giving context feels slower than prompting. Skip it and the quality usually falls apart.

Nick Holzherr described AI as “a distributed team member,” which is a useful comparison: anyone working outside the room needs clear documentation to do the job properly. Nobody expects remote employees to absorb how the business works on their own.

That knowledge has to be written down somewhere, with examples and enough detail for someone to understand how the company operates. AI is no different.

That’s why businesses with decent documentation and defined processes often get stronger results from AI sooner. The material needed to train it already exists.

If everything is still sitting in your own head, the output is usually worse because the AI is being asked to work inside a business that has never been clearly articulated.

Teach it what good looks like

You get weak output when you expect AI to pick up voice and standards from nothing. Give it a clear sense of how the business sounds at its best.

That means showing it strong examples of how you write, sell, and communicate, along with the kinds of phrasing or claims you do not want it using.

This matters because voice is not decoration. In a lot of businesses, it carries judgement. It tells you whether something feels salesy, technical, or generic. Leave that undefined and the model usually fills the gap with the average internet sludge it was trained on.

Yota Trom uses AI as an editor rather than a creator, saying it helps her because she’s not a native speaker. That’s a useful boundary. It gives the AI a clear job and makes it easier to see what should still stay with the human.

The same thing applies to the goal of the task. If you ask for writing, research, summaries, or strategic thinking without explaining what the work is supposed to achieve, the AI has to guess what good looks like, and the output usually gets weaker.

When the task has a clear purpose, the output usually improves, just as it does with a new hire.

Constraints matter too. If it cannot make claims without sources, say that. If it should not sound too polished, say that. If it needs to protect margin, stay within brand language, or avoid legal overreach, make that clear as well.

You don’t need a perfect instruction sheet for every task. You need to stop expecting AI to know the business better than the person using it.

This is where it gets useful

Don’t skip this part just because it is less exciting than trying a new tool.

The quality improves when you start correcting the work and letting that shape the next round. That is how a useful working relationship develops, whether it is a junior hire or a custom GPT.

Jess Jensen’s point about investing in the junior employee matters here. It takes repetition, correction, and enough patience to build something better over time instead of opening a fresh session and starting the whole disappointment cycle again.

What looks like the model getting smarter is often just better management. That is where the gains stop being theoretical.

Once the system is trained properly, more first-pass work gets handled before a human steps in. Drafts come together faster, research is easier to compile, and more of the admin-heavy work moves off people’s plates.

James Augustin thinks about AI automation as taking the admin-heavy parts of a role away so people can focus on the work they are actually good at and paid for.

Ryan Carruthers gave a similar example when he described building an AI that could handle after-hours sales work, gather the right information, and book calls so nobody on the human sales team had to keep carrying that burden late into the evening.

Damon Flowers sees it as getting work to 85 to 95% done before a person even touches it. That number will vary by task, but the principle is right.

At that point, you are reviewing and refining far more often than you are starting from scratch. That’s where the time starts coming back.

The real mistake you are making

AI is starting to expose which businesses are systemised and which still live mostly in the founder’s head.

Weak output comes from shoddy setup. The role is vague, the context is thin, the standards live in your head, and then the tool gets blamed for sounding half-baked.

You’re expecting too much from something you’ve barely trained.

That’s why this is more fixable than you think.

You don’t need a better model to get better results. You need to brief it clearly, train it properly, give it feedback, and hold the standard.

Do that and you’ll get more from AI than most people complaining about it ever will.

The last takeaway nails it: AI exposes who has systems and processes in place and who’s mostly just winging it.

I think about it in terms of the “RCGFE Prompting Framework”:

Role: who the LLM needs to emulate

Context: the background around the ask

Goal: what you’re trying to achieve

Framework: the mental model guiding what to focus on and what to ignore

Example: what good looks like
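As an illustration only, here is a minimal sketch of how the five RCGFE parts could be assembled into one brief. The `Brief` class, its `to_prompt` method, and every sample field value are hypothetical, made up for this example; this is not any tool’s API or the commenter’s own implementation.

```python
from dataclasses import dataclass


@dataclass
class Brief:
    """Hypothetical container for the five RCGFE parts of a prompt."""
    role: str       # who the LLM should emulate
    context: str    # background around the ask
    goal: str       # what the output is meant to achieve
    framework: str  # what to focus on and what to ignore
    example: str    # a sample of what good looks like

    def to_prompt(self) -> str:
        """Join the parts into one labelled brief, refusing any empty part."""
        parts = [
            ("Role", self.role),
            ("Context", self.context),
            ("Goal", self.goal),
            ("Framework", self.framework),
            ("Example", self.example),
        ]
        missing = [label for label, text in parts if not text.strip()]
        if missing:
            # An empty section is the "vague setup" problem, caught before sending.
            raise ValueError(f"Brief is missing: {', '.join(missing)}")
        return "\n\n".join(f"{label}:\n{text.strip()}" for label, text in parts)


# Made-up sample values, purely for illustration.
brief = Brief(
    role="Copy assistant for a small B2B software company.",
    context="Customers are operations managers at mid-sized logistics firms.",
    goal="Draft a 100-word product update email that gets replies, not clicks.",
    framework="Focus on the customer's time saved; ignore feature jargon.",
    example="Last month's best email: 'Quick one, route planning now takes half the clicks.'",
)
print(brief.to_prompt())
```

Raising an error on an empty section is a deliberate choice in this sketch: it forces the brief to be complete before anything is sent, which mirrors the point that a vague setup produces vague output.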

You don’t need longer prompts, just clarity.

The quality of output usually reflects the quality of input, and treating AI like a collaborator instead of a shortcut is where the real leverage starts to show.